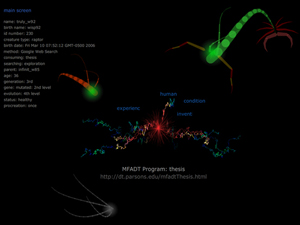

[CREATURES] (2006)

The project CREATURES builds lifeforms that grow, reproduce, mutate, and die on the Web

as they consume Web data and share it with other creatures that inhabit the space.

Creatures also use their blogs to communicate with other creatures and participants.

:: CREATURES @Peer Gallery, New York ::

We keep producing huge data flows and interactions on the Web every day, generating enormous energy

that sustains and evolves the Web, without noticing it. The Web, as an organic system,

seems to resemble our real world more and more, since in our real lives we also play our roles in sustaining

the natural organic system without noticing it. This project connects those two worlds

by having each simulate the other, and looks for new aspects of the organic system.

The goals of this project are to create a believable life simulation using the Web space and its data,

to allow users to interact with this simulation and to produce user experiences that reflect our own life process,

and to help users broaden their understanding of Web searching techniques or genetic algorithms

through an analogous visualization.

The purpose of this project is to create a meaningful life simulation that allows people to see

the activities we do every day on the Web from a different point of view, hopefully giving people a chance

to think about our lives in an organic system and the properties of life.

[Definition of Living Creature]

Each creature exhibits some properties of life:

it moves, grows, ages, dies, reproduces, mutates, evolves, and interacts.

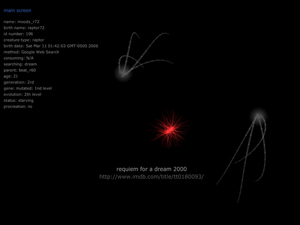

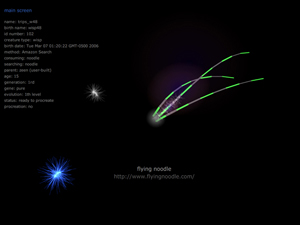

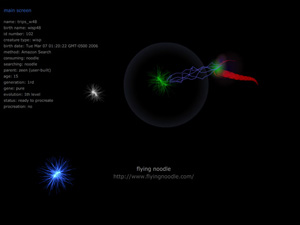

[Creature Types]

Currently, two creature types have been developed: wispType and raptorType.

WispType creatures collect and analyze specific data from websites and blogs, while raptorType creatures

search for and hunt the wispType creatures.

As raptorTypes cannot analyze data by themselves, they get analyzed data from the creatures they consume.

More creature types will be developed, including parasiteType, lurkerType, and tyranoType.

[Creature Behaviors]

* Gathering Web Data

Each creature travels through websites, searching for the word given by the user and reading the snippets of those websites.

It stores all the text data it reads on the server.

* Analyzing Data

Wisp-type creatures are able to analyze text data. They build a database from the text they read on webpages,

splitting the text data into words.
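
The word-splitting step can be illustrated with a minimal Python sketch. It is not the project's code (the project is written in Flash ActionScript and PHP); the function names and the choice of a word-count table as the "database" are assumptions made for illustration.

```python
import re
from collections import Counter

def split_into_words(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())

def update_word_database(database, snippet):
    """Add the words of one snippet to a running word-count table.

    The word-count table is an assumed stand-in for the creature's text database.
    """
    database.update(split_into_words(snippet))
    return database

database = Counter()
update_word_database(database, "Creatures live on the Web.")
update_word_database(database, "The Web feeds the creatures.")
print(database.most_common(3))
```

Each snippet a creature reads would be passed through `update_word_database`, so the table grows as the creature visits more websites.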

* Growth

Creatures' appearances continually change, and they keep expanding their text database by reading more websites.

After growing for a while, they choose their own name from the linking words they collected.

* Hunting Creatures (Raptor-types)

Raptor-type creatures chase and hunt wisp-type creatures.

They collect linking words from their prey and use those words to chase other creatures.

* Defending (Wisp-types)

Wisp-type creatures develop the ability to evade or escape from raptors.

* Evolution

Creatures expand the linking words for their search, and make their Web searching & analyzing process more efficient

by expanding their ignoring-words lists. Through the evolution process, wispType creatures evolve their Web searching abilities,

and raptorType creatures develop their hunting abilities.
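
One way an ignoring-words list could grow is sketched below in Python. The page does not say how creatures decide which words to ignore, so the rule used here (ignore any word that appears in at least a given share of the snippets read) is purely an assumption, as are the function name and the threshold value.

```python
def expand_ignore_list(ignore_words, snippets, threshold=0.8):
    """Return ignore_words plus every word found in at least `threshold` of the snippets.

    Assumed heuristic: a word that shows up almost everywhere does not help
    narrow a search, so it is not worth analyzing again.
    """
    if not snippets:
        return set(ignore_words)
    snippet_counts = {}
    for snippet in snippets:
        # Count each word once per snippet, however often it repeats inside it.
        for word in set(snippet.lower().split()):
            snippet_counts[word] = snippet_counts.get(word, 0) + 1
    common = {w for w, n in snippet_counts.items() if n / len(snippets) >= threshold}
    return set(ignore_words) | common

snippets = [
    "the wisp reads the web",
    "the raptor hunts the wisp",
    "the web is the habitat",
]
ignore_words = expand_ignore_list({"a"}, snippets)
print(sorted(ignore_words))
```

With a larger ignore list, fewer words remain as linking-word candidates, which is one plausible reading of how searching and analyzing become more efficient.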

* Reproduction / Mutation

After growing enough, creatures produce children with similar behavior patterns,

and at random they produce children that search for new words that appeared frequently during their own search process.

Those children are tested on whether they can find the original word, and only the successful creatures survive

and continue to the next mutation process. Through this mutation process, the set of linking words expands.
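
The mutation-and-test cycle described above can be sketched in Python as follows. This is a simplified illustration under stated assumptions: the tiny `CORPUS` list stands in for live Web search, and `search`, `frequent_words`, `mutate`, and `survives` are hypothetical names, not the project's functions.

```python
import random
from collections import Counter

# Tiny in-memory stand-in for the Web (assumption); the project searches live websites.
CORPUS = [
    "wisp creatures read web pages",
    "web pages link to other web pages",
    "raptor creatures hunt wisp creatures",
    "gardens need water",
]

def search(word):
    """Return every corpus snippet that contains the word."""
    return [s for s in CORPUS if word in s.split()]

def frequent_words(word, ignore_words, top_n=3):
    """List the words that appear most often in the search results for `word`."""
    counts = Counter(
        w
        for snippet in search(word)
        for w in snippet.split()
        if w != word and w not in ignore_words
    )
    return [w for w, _ in counts.most_common(top_n)]

def mutate(parent_word, ignore_words, rng):
    """Pick a new search word for a child from the parent's frequent words."""
    candidates = frequent_words(parent_word, ignore_words)
    return rng.choice(candidates) if candidates else None

def survives(child_word, original_word):
    """A child survives if its own search results mention the original word."""
    return any(original_word in s.split() for s in search(child_word))

rng = random.Random(0)
linking_words = {"wisp"}
child = mutate("wisp", {"to", "other"}, rng)
if child is not None and survives(child, "wisp"):
    linking_words.add(child)  # the surviving child's word becomes a linking word
print(linking_words)
```

A child whose word leads nowhere near the original word, such as `"water"` in this toy corpus, fails the test and is dropped, so only related words are added to the linking words.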

* Blogging / Sharing Data

Each creature type has its own blog, and creatures share their data using the blog space.

Creatures occasionally post blog entries with their collected and analyzed texts, and they also post blog entries

when certain events happen, such as procreation or hunting.

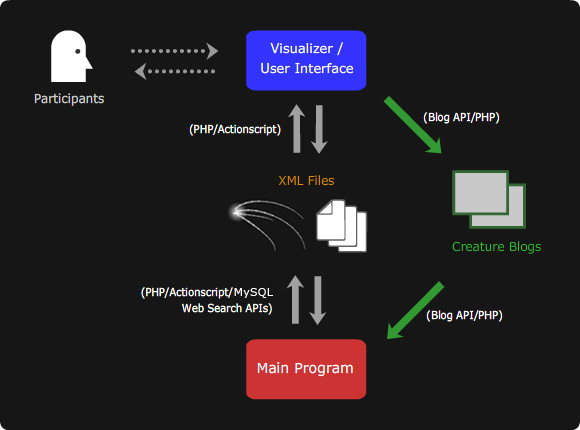

[System Structure: How It Works]

The project is mainly being developed in Flash ActionScript and PHP.

* Each creature exists as a group of XML files on the server, containing the attributes of the creature's appearance,

locomotion, behavior patterns, and collected and analyzed data from the Web.

* The main program continually updates the data in those files as it performs Web searching and data analysis.

* Another program, the 'Visualizer', reads the data and visualizes it as lifeforms, and provides the user interface.

* Both programs read and post blog entries.
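
The split between a program that updates creature files and a program that reads them can be sketched in Python. The XML layout below is hypothetical: the page does not publish the real file format, so every element and attribute name here is an assumption, and the project itself uses ActionScript and PHP rather than Python.

```python
import xml.etree.ElementTree as ET

# Hypothetical creature record; element and attribute names are illustrative only.
CREATURE_XML = """
<creature type="wispType" name="">
  <appearance size="1.0"/>
  <locomotion speed="0.5"/>
  <data searchWord="garden">
    <word text="flower" count="3"/>
  </data>
</creature>
""".strip()

def record_word(creature, text):
    """Main-program side: increment a word's count, adding the element if missing."""
    data = creature.find("data")
    for word in data.findall("word"):
        if word.get("text") == text:
            word.set("count", str(int(word.get("count")) + 1))
            return
    ET.SubElement(data, "word", {"text": text, "count": "1"})

def describe(creature):
    """Visualizer side: read the type, size, and word counts from the record."""
    words = {w.get("text"): int(w.get("count")) for w in creature.iter("word")}
    size = float(creature.find("appearance").get("size"))
    return creature.get("type"), size, words

creature = ET.fromstring(CREATURE_XML)
record_word(creature, "flower")
record_word(creature, "seed")
print(describe(creature))
```

Keeping the creature's state in files means the two programs never need to call each other: one writes, the other reads whatever is currently stored.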

[Working Demo]

* The creatures' locomotion is supposed to be very smooth and natural in these demo programs.

If it is not, that is because these demos are temporary and not yet optimized for Web browsers.

Thus, these demo programs may run slowly. The speed can vary depending on the speed of the system.

* Flash Player 8 is required to run this demo.

This is a SELF-RUNNING DEMO showing all creature behaviors, including the descriptions of the project.

Running time: 5 min.

[LAUNCH SELF-RUNNING DEMO]

Another demo, the visualizing program, shows how participants can browse and watch the creatures

currently living on the server, and build new creatures.

In this demo, the main program is intentionally not running.

Thus, creatures just stay on the same website, without moving to another website, growing,

or performing any other behaviors.

[LAUNCH MAIN USER INTERFACE DEMO]

And these are sample blogs to which the current creatures are posting entries.

[WISP BLOG]

[RAPTOR BLOG]